Clinical Researcher—April 2026 (Volume 40, Issue 2)

PEER REVIEWED

Tony Succar, PhD, MScMed(OphthSc); Karen Manrique, MS; Apurva Uniyal, MA, MS; Gordon Wimpress, BA, BSc; Amelia Spinrad, MS; Annie Ly, MS, MD Candidate; Eunjoo Pacifici, PharmD, PhD

Investigator-initiated trials (IITs) are essential for driving innovation and advancing clinical research. However, these studies often lack structured monitoring and quality oversight, potentially compromising data integrity and regulatory compliance. While Good Clinical Practice (GCP) trainings exist, many are too broad, costly, or inaccessible, leaving academic investigators and their staff without practical tools to ensure trial quality.

To address this gap, we developed the Clinical Trial Quality Training Series, three self-paced modules (Monitoring, Auditing, and Inspection Readiness) that are guided by an implementation science framework. The framework informed all stages of development: exploration, installation, initial implementation, full implementation, and sustainability/innovation.

A pilot study auditing five IIT sites revealed common deficiencies in informed consent documentation, Health Insurance Portability and Accountability Act (HIPAA) compliance, data traceability, and staff training records, reinforcing the need for targeted training. Since launch, the modules have been accessed more than 90,000 times by learners in 91 countries across all six World Health Organization (WHO) regions.

Adoption indicators include integration into institutional onboarding programs and issuance of 917 digital badges for module completion. End-of-course surveys show high satisfaction, with more than 92% of users rating modules 4 or 5 out of 5. Feedback highlights the benefits of practical case studies, accessibility, and career development.

Applying an implementation science framework enabled broad dissemination and sustained engagement with these modules, addressing critical gaps in IIT quality oversight. This scalable, evidence-based educational program equips research teams to strengthen monitoring practices, ensure regulatory compliance, and improve the translatability of academic research.

Understanding IITs and Their Performance Issues

IITs play an important role in the clinical trial ecosystem, enabling academic and clinical investigators to address innovative hypotheses that could advance the development of new therapeutic products, explore new uses for existing products, or improve health outcomes.{1} Unlike industry-sponsored trials, which prioritize regulatory approval and commercialization, IITs often pursue scientific questions with limited commercial potential. However, the impact and generalizability of these studies are frequently compromised by variability in trial quality and operational rigor.{2}

A major contributor to this challenge is the lack of structured monitoring and quality oversight, which are standard in industry-sponsored studies but rarely implemented in academic settings due to resource constraints. Accordingly, the availability of accessible, cost-free training resources is essential to support academic investigators in embedding quality principles throughout the clinical trial lifecycle, particularly within resource-limited environments.

Education is a vital component in promoting quality in clinical trial conduct.{3} Recent work has described the development and evaluation of the CONSCIOUS II curriculum, designed for PhD students and early-career researchers managing IITs. This program integrates asynchronous study materials with a three-month pilot course, resulting in improved skills and positive feedback on the quality of the trials.{4}

Similarly, another initiative introduced structured, quality-by-design studios within an academic health center. Findings showed that this approach optimized protocol development and improved principal investigator engagement in critical-to-quality elements for clinical research. A Clinical Investigator Training Program for early-career clinicians included lectures, group exercises, and protocol development. Training participants showed significantly improved test scores and increased principal investigator activity.{5}

A European survey reviewed national training activities for IITs. It identified diverse educational offerings and suggested the systematic sharing of curricula and core competencies across countries to further optimize protocol quality and the skills of trial personnel.{6–8}

Effective oversight—through systematic monitoring and auditing—is critical for ensuring data integrity, participant safety, and regulatory compliance. However, most IITs lack dedicated monitoring personnel, standardized processes, and comprehensive training programs.

Our previous findings indicate that existing GCP resources, although widely available, are often too broad, costly, or inaccessible to meet the practical needs of academic investigators.{9–11} Moreover, only about 65% of IITs undergo monitoring, and research professionals frequently report the need for additional training to build monitoring competencies.{9} These gaps heighten the risk of protocol deviations, data errors, and regulatory noncompliance, ultimately threatening trial validity.{12}

Tackling the Challenges of Effective IIT Training

Addressing these challenges requires innovative, accessible educational solutions tailored to the realities of academic research. Self-paced, online learning modules offer flexibility and scalability, overcoming barriers such as time constraints and geographic limitations. To maximize effectiveness, such interventions should be informed by implementation science—a discipline focused on promoting the systematic adoption of evidence-based practices into routine settings.{13}

The Consolidated Framework for Implementation Research (CFIR) and the Exploration, Preparation, Implementation, Sustainment (EPIS) model provide a structured approach to understanding and addressing factors that affect intervention uptake. These encompass individual, organizational, and contextual determinants that could impact behaviors and program integration. For example, the CFIR outlines key domains for implementation, including intervention characteristics, inner setting (organizational culture and capacity), outer setting (external policies and incentives), characteristics of individuals, and the implementation process itself.{14}

In the remainder of this article, we describe how we developed and globally disseminated the Clinical Trial Quality Training Series.

Methods

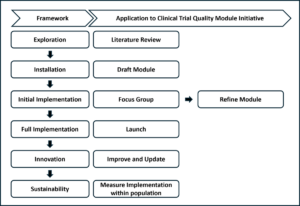

We applied an implementation science framework to guide the development and dissemination of the Clinical Trial Quality Training Series. Activities were organized into stages: exploration, installation, initial implementation, full implementation, innovation, and sustainability (see Figure 1).

Figure 1: Stages of Implementation Science

Overview of how the implementation science framework was applied to the creation of the self-paced training modules.

Exploration

To assess quality gaps in IITs and inform module design, we conducted literature reviews and stakeholder surveys, followed by a pilot study at the University of Southern California (2019–2020). Five IITs were stratified into low-, medium-, and high-risk categories based on predefined factors, including investigator experience, study complexity, and involvement of vulnerable populations, consistent with National Institutes of Health risk-based monitoring guidance.{15}

Monitoring visits reviewed regulatory and subject binders proportionally to risk level (10–20% for low risk, 20–50% for medium risk, and 50–100% for high risk). Four domains were evaluated: informed consent/HIPAA documentation, regulatory records, data verification, and staff training compliance.
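The proportional review rule described above can be illustrated with a minimal sketch. The percentage ranges come from the text; the function name, the use of a binder count as input, and the choice to round up are illustrative assumptions, not a description of the tooling used in the pilot study.

```python
from typing import Tuple

# Review ranges (in percent) per risk tier, as stated in the text.
REVIEW_PERCENT = {
    "low": (10, 20),
    "medium": (20, 50),
    "high": (50, 100),
}


def binders_to_review(total_binders: int, risk: str) -> Tuple[int, int]:
    """Return the (minimum, maximum) number of binders to review.

    Assumption: counts are rounded up so that at least the stated
    percentage of binders is covered. Integer arithmetic avoids
    floating-point surprises in the rounding.
    """
    if total_binders < 0:
        raise ValueError("total_binders must be non-negative")
    if risk not in REVIEW_PERCENT:
        raise ValueError(f"unknown risk tier: {risk!r}")
    low_pct, high_pct = REVIEW_PERCENT[risk]
    minimum = (total_binders * low_pct + 99) // 100   # ceiling division
    maximum = (total_binders * high_pct + 99) // 100  # ceiling division
    return minimum, maximum


if __name__ == "__main__":
    for tier in ("low", "medium", "high"):
        print(tier, binders_to_review(40, tier))
```

Under these assumptions, a hypothetical study with 40 subject binders would have 4 to 8 binders reviewed if classified as low risk, 8 to 20 if medium risk, and 20 to 40 if high risk.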

Installation

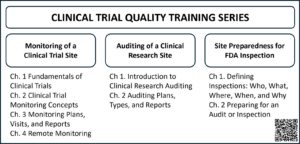

Three self-paced modules—Monitoring, Auditing, and Inspection Readiness—were developed in accordance with U.S. Food and Drug Administration (FDA) regulations, International Council for Harmonization Good Clinical Practice guidelines, and real-world case studies (e.g., FDA Warning Letters) (see Figure 2).

Figure 2: Modules

Listed here are the chapters for each of the monitoring, auditing, and inspection readiness modules, along with a QR code to access the courses.

Content creation involved drafting scripts, designing visual slides, and recording narrated transcripts to enhance engagement. Modules were produced in Articulate Storyline® and hosted on Moodle®, supplemented with downloadable templates, checklists, and reference materials.

Initial Implementation

Focus groups (15–25 participants from clinical research units and the Southern California Clinical and Translational Science Institute [SC-CTSI]) evaluated usability, relevance, and effectiveness. Feedback was collected via structured surveys and discussion sessions, informing revisions such as additional case studies, clearer explanations of monitoring principles, improved chapter organization, and integration of certificates and feedback mechanisms.

Full Implementation

Finalized modules were disseminated through institutional channels, professional networks, and conferences (e.g., Association of Clinical Research Professionals, Trial Innovation Network).{3} Launch dates were November 2018 (Monitoring), September 2021 (Auditing), and May 2024 (Inspection Readiness). Adoption indicators included integration into onboarding programs and issuance of digital badges for module completion.

Innovation and Sustainability

Modules undergo continuous improvement based on user feedback and evolving regulatory requirements. Updates include new content (e.g., remote monitoring chapter added during COVID-19) and technical enhancements. A dedicated team monitors platform analytics and user comments to ensure relevance and long-term impact.

Results

Exploration

Some exploration-stage activities, including literature reviews and stakeholder surveys, had been conducted previously.{9} For this report, a pilot study was performed to assess quality gaps in the conduct of IITs, and its results confirmed that such gaps were significant.

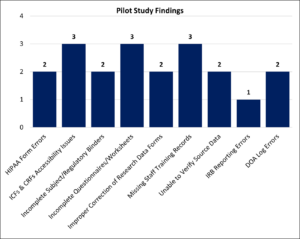

As previously noted, across five audited sites, deficiencies were observed in informed consent documentation (3/5), HIPAA compliance (2/5), data traceability (3/5), and staff training records (3/5). Regulatory documentation gaps were noted in two studies. Findings were more frequent in medium- and high-risk trials, averaging four and five issues per study, respectively, compared to two in low-risk trials. These results underscore the need for structured, risk-informed oversight and informed the design of the training modules (see Figure 3).

Figure 3: Pilot Study Findings

Shown here are the deficiencies identified during the monitoring of five IITs. ICFs=informed consent forms; CRFs=case report forms; IRB=institutional review board; DOA=delegation of authority.

Installation

Development of the three modules spanned January 2016 to May 2024. The monitoring module required 23 months, auditing 32 months, and inspection readiness 16 months. The COVID-19 pandemic delayed the auditing module and prompted the addition of a remote monitoring chapter. Each module incorporated case studies based on FDA Warning Letters, interactive quizzes, and downloadable resources such as templates and checklists. Module durations were 227 minutes (Monitoring), 91 minutes (Auditing), and 61 minutes (Inspection Readiness).

The lessons are produced in Articulate Storyline, whose player includes several accessibility features. The player controls can be navigated logically and easily with a keyboard, using the arrow keys or the Tab key. One of those controls is the closed captioning on/off button; the captions in each lesson are transcribed from the script to ensure accuracy. The player also includes several keyboard shortcuts that can be toggled on and off, including shortcuts for play/pause, mute/unmute audio, replay slide, next slide, and toggle full screen, among others.

Initial Implementation

Focus groups of 15–25 participants evaluated usability and relevance. Feedback led to enhancements such as expanded case studies, clearer explanations of monitoring principles, improved chapter organization, and integration of certificates and feedback systems. Additional sessions were held when major revisions were introduced to confirm alignment with user needs.

Full Implementation

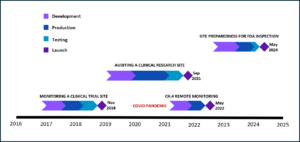

The first in the quality series, the Monitoring module, was launched in November 2018, followed by the Auditing module in September 2021, and the Inspection Readiness module in May 2024. As shown in Figure 4, each module launch was preceded by development, production, and testing and refinement stages. Since their launch, more than 90,000 cumulative views have been recorded.

Figure 4: Module Development Timeline

Shown here are the timelines for the creation of each of the Monitoring, Auditing, and Inspection Readiness modules, including the addendum for remote monitoring.

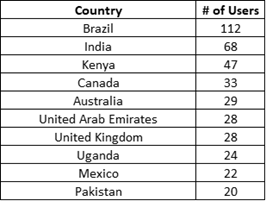

The geographical distribution of learners shows the modules’ broad reach across 91 countries and territories. Outside the U.S., the country with the most learners was Brazil, followed by India, Kenya, Canada, Australia, and the United Arab Emirates (see Table 1).

Table 1: Top 10 non-U.S. Countries for Clinical Trial Quality Training Series Access

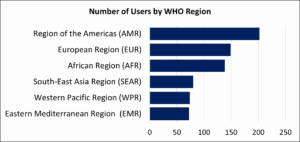

Countries were categorized according to the WHO’s regional classification, which defines six regions: Africa, the Americas, the Eastern Mediterranean, Europe, South-East Asia, and the Western Pacific.{16} According to this analysis, the region (excluding the U.S.) with the largest number of users was the Americas, followed by Europe, Africa, South-East Asia, Western Pacific, and Eastern Mediterranean, with modules reaching users across all WHO-defined regions (see Figure 5 and Table 2).

Figure 5: Number of Users by WHO Region, Excluding the U.S.

Table 2: Users in WHO Regions—Country Breakdown, Excluding the U.S.

In November 2022, a reward system was created to issue digital badges to users who complete an entire module, and a special badge to those who complete the whole series. These badges can be used to demonstrate competencies in online resumes, profiles, and communications. As of September 9, 2025, 917 badges had been awarded.

Innovation and Sustainability

Continuous updates to the modules ensure their relevance and compliance with evolving regulations. Two years after launch, the Monitoring module was updated with a new chapter on remote monitoring to address needs arising from the COVID-19 pandemic (see Figure 4). The user feedback mechanism built into the platform has guided further improvements addressing technical and content issues.

End-of-course surveys indicate high satisfaction: 92.6% of Monitoring module users (n=593), 93.7% of Auditing module users (n=284), and 95.5% of Inspection Readiness module users (n=111) rated the modules 4 or 5 out of 5. Qualitative feedback emphasized engaging narration, practical case studies, and career development benefits. Users were also given opportunities to comment through the end-of-course survey’s open-response field and in discussion forums. Overall, the feedback was highly favorable, with 86 of 134 comments underscoring the quality of the modules and conveying strong appreciation.
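As a worked illustration of the figures above, the sketch below pools the three module-level satisfaction rates into a single respondent-weighted rate and computes the share of favorable comments. It assumes that each reported n is the number of survey respondents for that module; the pooled rate is an illustrative calculation and not a statistic reported by the authors.

```python
# (percent rating the module 4 or 5, number of respondents), from the text.
# Assumption: n is the number of survey respondents for each module.
SURVEYS = {
    "Monitoring": (92.6, 593),
    "Auditing": (93.7, 284),
    "Inspection Readiness": (95.5, 111),
}


def pooled_satisfaction(surveys: dict) -> float:
    """Respondent-weighted average of the per-module satisfaction rates."""
    total_respondents = sum(n for _, n in surveys.values())
    weighted_sum = sum(pct * n for pct, n in surveys.values())
    return weighted_sum / total_respondents


def favorable_share(favorable: int, total: int) -> float:
    """Percentage of comments classified as favorable."""
    return 100 * favorable / total


if __name__ == "__main__":
    print(f"Pooled satisfaction: {pooled_satisfaction(SURVEYS):.1f}%")
    print(f"Favorable comments: {favorable_share(86, 134):.1f}%")
```

Under that assumption, the pooled rate across 988 respondents is roughly 93.2%, consistent with the statement that more than 92% of users rated the modules 4 or 5, and the 86 favorable comments correspond to about 64.2% of the 134 received.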

When asked how they discovered the modules, most of the 987 responding learners indicated that they did so through colleagues (47.8%), followed by e-mail (10.9%), onsite symposium (6.8%), and SC-CTSI event (3.6%). About a third of the users discovered the modules through other means, which included internet searches (Google®, YouTube®, and Reddit®) and institutional recommendations (examples: Eastern Michigan University, Latin America Oncology Cooperative, USC Alzheimer’s Therapeutic Institute, Cleveland Clinic, Oklahoma University Health Science Campus, and Abu Dhabi Department of Health).
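The "about a third" figure for other discovery routes follows from the four named percentages. A minimal sketch of that arithmetic is shown below; the approximate respondent counts are back-calculated from rounded percentages and are therefore illustrative only.

```python
# Discovery channels and their reported shares (percent), from the text.
CHANNELS = {
    "Colleagues": 47.8,
    "E-mail": 10.9,
    "Onsite symposium": 6.8,
    "SC-CTSI event": 3.6,
}
RESPONDENTS = 987


def other_share(channels: dict) -> float:
    """Share (percent) not covered by the named channels."""
    return round(100.0 - sum(channels.values()), 1)


def approx_count(pct: float, respondents: int) -> int:
    """Approximate respondent count implied by a rounded percentage."""
    return round(respondents * pct / 100)


if __name__ == "__main__":
    for name, pct in CHANNELS.items():
        print(f"{name}: {pct}% (about {approx_count(pct, RESPONDENTS)} learners)")
    rest = other_share(CHANNELS)
    print(f"Other: {rest}% (about {approx_count(rest, RESPONDENTS)} learners)")
```

The named channels sum to 69.1%, leaving 30.9% (roughly 305 of the 987 respondents) for internet searches and institutional recommendations.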

Discussion

Our findings demonstrate that applying an implementation science framework to the development and dissemination of self-study modules can significantly enhance their adoption and sustainability in academic clinical research. These results support the effectiveness of both the framework{17} and online education modules, as demonstrated in other settings.{18–22}

The pilot study results reinforce the need for structured, risk-informed monitoring in IITs. Deficiencies in informed consent documentation, HIPAA compliance, and data traceability highlight vulnerabilities that could compromise trial validity and participant safety. By addressing these gaps through targeted training, the modules equip research teams with practical tools to implement quality oversight, thereby reducing regulatory risk and improving data integrity.

The wide reach of this initiative and the positive feedback we have received suggest that we are meeting the needs of clinical trial professionals both domestically and internationally. The modules’ design and contents ensure users are trained in protocol adherence, data integrity, safety reporting, and regulatory requirements—all of which are critical to upholding trial quality and protecting participant rights. The flexibility and accessibility of these online modules received many positive comments.

Measuring an implementation science framework’s effectiveness involves evaluating such program outcomes as feasibility (can it be done in the real setting?), fidelity (is the training delivered as planned?), acceptability (do trainees find it relevant and appropriate?), reach (how many people are using it, and at what levels?), and sustainability (can it last long-term?). These should be considered alongside such training outcomes as knowledge gain via pre/post-tests and linked to desired results, using specific metrics and tools to understand how and why the training succeeded or failed in practice, not just whether people liked it. Key steps include assessing core components (intervention, context, process) and using mixed-methods (interviews, surveys, observation) across different levels (individual, organizational) to determine whether the training led to sustained, long-term use of learned skills in practice.{23}

The global reach of the Clinical Trial Quality Training Series—with more than 90,000 views across 91 countries—underscores the scalability and relevance of this approach. Integration into institutional onboarding programs and issuance of digital badges further indicate organizational acceptance and long-term utility. Our results also demonstrated significant uptake in regions of the world where there is a scarcity of clinical trial and regulatory science educational training programs, for example, in Latin America.

The main pedagogical approaches for the self-study modules include self-directed learning, learner-centered design, and technology to ensure independent learner engagement, mastery of material, and better learning outcomes.{24–30} Implementation science frameworks such as the CFIR and the EPIS framework emphasize constructs such as acceptability, feasibility, fidelity, and sustainability,{31–34} which are crucial for assessing whether training modules effectively improve trial monitoring practices.

Our evaluation aligns with these principles: high satisfaction scores (>92% rating modules 4 or 5), positive qualitative feedback on usability and relevance, and evidence of sustained engagement through continuous updates and institutional integration. These outcomes suggest that the intervention achieved both educational and implementation objectives.{35} Utilizing such frameworks facilitates stakeholder engagement, iterative refinement of educational content based on feedback, and accommodation of varying institutional contexts, collectively enhancing the likelihood of successful implementation and sustainability.{36}

The flexibility and accessibility of online, self-paced modules were critical to success, particularly in resource-limited settings where traditional training is scarce. Feedback highlighted practical case studies, engaging narration, and career development benefits, reinforcing the value of learner-centered design. Similar studies have reported that self-directed online learning improves academic performance and professional competencies, supporting our approach. These findings align with previous reports highlighting gaps in structured quality oversight and monitoring systems for academic IITs.{37}

Dissemination occurred primarily through word of mouth rather than active marketing. This success was supported by the modules’ fully transcribed and narrated format, which allows learners to absorb the content without viewing slides. Designed for modern, podcast-style learning, the modules enable users to listen on the go, making them accessible to those with limited desktop access. They are also smartphone-compatible, ensuring flexibility and convenience for diverse learning environments.

Despite these strengths, limitations remain. The modules are currently available only in English and require internet access, which may restrict use in some regions. Future strategies include developing hardcopy versions, enabling AI-driven translation, and expanding content to address global regulatory variations. Additionally, while anecdotal evidence suggests a positive impact on monitoring practices, formal assessments of competency gains and behavioral changes are needed to confirm long-term effectiveness. Additional plans include developing an interactive portal for advanced training and on-demand regulatory consulting, and expanding modules to cover emerging technologies and advanced therapies.

Optimizing IIT quality through accessible, evidence-informed education has implications beyond academia. High-quality trials generate reliable data that inform clinical guidelines, regulatory decisions, and health policy, ultimately improving patient care. By embedding implementation science principles into educational design, this initiative offers a scalable model for strengthening research quality worldwide.

Disclaimer

The authors used Copilot, an AI-assisted editing tool, to improve spelling, grammar, clarity, and readability during manuscript preparation. After using this tool, the authors carefully reviewed and edited the text and take full responsibility for the final content.

Conflict of Interest

The authors report no conflicts of interest.

Funding Statement

This work was supported by grant UL1TR001855 from the National Center for Advancing Translational Sciences (NCATS) of the U.S. National Institutes of Health. The content is solely the responsibility of the authors and does not necessarily represent the official views of the National Institutes of Health.

References

- Orechwa AZ, et al. 2025. Protocol for Applying an Enhanced Quality-by-Design Program Across the Translational Science Spectrum. Journal of Clinical and Translational Science. doi:10.1017/cts.2025.10200

- Lv W, et al. 2022. Panoramic quality assessment tool for investigator initiated trials. Front Public Health 10:988574. doi:10.3389/fpubh.2022.988574

- Succar T, Terteryan A, Manrique K, Pacifici E. 2024. Optimizing Clinical Trial Education in Academia. Clinical Researcher 38(5). https://acrpnet.org/2024/10/22/optimizing-clinical-trial-education-in-academia

- Rychlíčková J, et al. 2025. Enhancing pragmatic competence in investigator-initiated clinical trials: structure and evaluation of the CONSCIOUS II training programme. BMC Med Educ 25(1):502. doi:10.1186/s12909-025-07054-5

- Moradi H, Schneider M, Streja E, Cooper D. 2021. Feasibility and acceptability of a structured quality by design approach to enhancing the rigor of clinical studies at an academic health center. J Clin Transl Sci 5(1):e175. doi:10.1017/cts.2021.837

- Saleh M, et al. 2020. Clinical Investigator Training Program (CITP) – A practical and pragmatic approach to conveying clinical investigator competencies and training to busy clinicians. Contemp Clin Trials Commun 19:100589. doi:10.1016/j.conctc.2020.100589

- Magnin A, et al. 2019. European survey on national training activities in clinical research. Trials 20(1):616. doi:10.1186/s13063-019-3702-z

- Vermeersch K, et al. 2019. Challenges and threats of investigator-initiated multicenter randomized controlled trials: the BACE trial experience. International Journal of Clinical Trials 6(4):175–84. doi:10.18203/2349-3259.ijct20194652

- Spinrad A, et al. 2019. 3080 Ensuring Quality in Investigator-Initiated Clinical Trials through Monitoring Concepts Training. Journal of Clinical and Translational Science 3(s1):117. doi:10.1017/cts.2019.267

- Ly A, Pacifici E, Pire-Smerkanich N, Spinrad A, Xie A. 2018. Improving the Quality of Clinical Trials in Academic Research Facilities: Developing Training Modules Utilizing an Implementation Science Framework. Southern California Regional Dissemination, Implementation & Improvement Science Symposium (Los Angeles, Calif.).

- Xie A, Ly A, Spinrad A, Pacifici E, Pire-Smerkanich N. 2018. Exploring the Gaps in Quality Control of Clinical Trials. University of Southern California Moving Targets 2018 Conference (Los Angeles, Calif.).

- Konwar M, Bose D, Gogtay NJ, Thatte UM. 2018. Investigator-initiated studies: Challenges and solutions. Perspect Clin Res 9(4):179–83. doi:10.4103/picr.PICR_106_18

- Eccles MP, Mittman BS. 2006. Welcome to Implementation Science. Implementation Science 1(1):1. doi:10.1186/1748-5908-1-1

- Damschroder LJ, Aron DC, Keith RE, Kirsh SR, Alexander JA, Lowery JC. 2009. Fostering implementation of health services research findings into practice: a consolidated framework for advancing implementation science. Implement Sci 4:50. doi:10.1186/1748-5908-4-50

- National Institute of Mental Health. NIMH Guidance on Risk-Based Monitoring. https://www.nimh.nih.gov/funding/clinical-research/nimh-guidance-on-risk-based-monitoring

- World Health Organization. World Regions According to the World Health Organization. https://ourworldindata.org/grapher/who-regions

- Community Engagement Program: Supporting bi-directional community engagement to improve the relevance, quality, and impact of research. Harvard Catalyst. https://catalyst.harvard.edu/community-engagement/implementation-science/#:~:text=For%20example%2C%20some%20frameworks%20focus,or%20framework%20for%20your%20research

- Succar T, et al. 2013. The impact of the Virtual Ophthalmology Clinic on medical students’ learning: a randomised controlled trial. Eye (Lond) 27(10):1151–7. doi:10.1038/eye.2013.143

- Hoang A, Hepburn SJ, Morawska A, Sanders MR. 2025. The effect of self-reflection on the outcomes of online clinical skills training: a comparative study. Adv Health Sci Educ Theory Pract 30(5):1621–39. doi:10.1007/s10459-025-10425-8

- Al Yazeedi B, Al Azri Z, Shakman L, Al Shidhani S, Al Kharusi M. 2025. Academic Performance and Satisfaction of Student-Directed Learning in Online Education Among Multidisciplinary Undergraduate Students: A Retrospective Comparative Study. SAGE Open Nurs 11:23779608251365990. doi:10.1177/23779608251365990

- Zheng M, Bender D, Lyon C. 2021. Online learning during COVID-19 produced equivalent or better student course performance as compared with pre-pandemic: empirical evidence from a school-wide comparative study. BMC Med Educ 21(1):495. doi:10.1186/s12909-021-02909-z

- Prabu Kumar A, et al. 2023. E-learning and E-modules in medical education-A SOAR analysis using perception of undergraduate students. PLoS One 18(5):e0284882. doi:10.1371/journal.pone.0284882

- Vroom EB, Albizu-Jacob A, Massey OT. 2021. Evaluating an Implementation Science Training Program: Impact on Professional Research and Practice. Glob Implement Res Appl 1(3):147–59. doi:10.1007/s43477-021-00017-0

- Padwal M, Takale L, Phadke A, Gawade Maindad G, Karmarkar M, Pokale AB. 2025. Self-Directed Learning Strategies: A Comparative Evaluation of Facilitated and Self-Paced Methods. Cureus 17(7):e88982. doi:10.7759/cureus.88982

- Aulakh J, Wahab H, Richards C, Bidaisee S, Ramdass P. 2025. Self-directed learning versus traditional didactic learning in undergraduate medical education: a systemic review and meta-analysis. BMC Med Educ 25(1):70. doi:10.1186/s12909-024-06449-0

- Finn A, Fitzgibbon C, Fonda N, Gosling CM. 2025. Self-directed learning and the student learning experience in undergraduate clinical science programs: a scoping review. Adv Health Sci Educ Theory Pract 30(3):973–1005. doi:10.1007/s10459-024-10383-7

- Sande S, Rajguru MM. 2025. Effectiveness of integrated self-directed learning in the form of case-based learning to promote active learning among second-year MBBS students. J Educ Health Promot 14:208. doi:10.4103/jehp.jehp_683_24

- Robinson JD, Persky AM. 2020. Developing Self-Directed Learners. Am J Pharm Educ 84(3):847512. doi:10.5688/ajpe847512

- Charokar K, Dulloo P. 2022. Self-directed Learning Theory to Practice: A Footstep towards the Path of being a Life-long Learner. J Adv Med Educ Prof 10(3):135–44. doi:10.30476/jamp.2022.94833.1609

- Xu L, Duan P, Padua SA, Li C. 2022. The impact of self-regulated learning strategies on academic performance for online learning during COVID-19. Front Psychol 13:1047680. doi:10.3389/fpsyg.2022.1047680

- Cope TL, et al. 2025. Embedding Implementation Science in Clinical Trials: A Framework for Academic-Life Science Partnerships. Pharmaceut Med 39(6):387–95. doi:10.1007/s40290-025-00581-y

- McNamara DA, Lonergan PE, Rafferty P, Fitzpatrick F, Hayes C. 2025. Use of implementation science frameworks to identify core components and sustainability characteristics of a quality improvement learning collaborative. BMC Health Serv Res 25(1):1257. doi:10.1186/s12913-025-13346-9

- Schmitt M, Hawkins M, Florsheim P. 2025. Key determinants in implementation processes: a systematic review using the Consolidated Framework for Implementation Research (CFIR). Implement Sci Commun 6(1):89. doi:10.1186/s43058-025-00712-1

- Smith JD, Polaha J. 2017. Using implementation science to guide the integration of evidence-based family interventions into primary care. Fam Syst Health 35(2):125–35. doi:10.1037/fsh0000252

- Proctor E, et al. 2011. Outcomes for implementation research: conceptual distinctions, measurement challenges, and research agenda. Adm Policy Ment Health 38(2):65–76. doi:10.1007/s10488-010-0319-7

- Nilsen P. 2015. Making sense of implementation theories, models and frameworks. Implement Sci 10:53. doi:10.1186/s13012-015-0242-0

- Gupta A. 2013. Taking the ‘Risk’ out of risk-based monitoring. Perspect Clin Res 4(4):193–5. doi:10.4103/2229-3485.120165

Tony Succar, PhD, MScMed(OphthSc), is an Assistant Professor in the Department of Regulatory and Quality Sciences at the Alfred E. Mann School of Pharmacy and Pharmaceutical Sciences and Director of the Master of Science in Clinical Trial Management program, both at the University of Southern California (USC). He is also the Associate Director of the Regulatory Knowledge and Support Core of the Southern California Clinical and Translational Science Institute (SC-CTSI).

Karen Manrique, MS, is Program Administrator, Regulatory Science Research and Co-Curricular Program, USC Mann Department of Regulatory and Quality Sciences, SC-CTSI.

Apurva Uniyal, MA, MS, is a Regulatory Innovation Research Scientist, The DK Kim International Center for Regulatory Science, Department of Regulatory and Quality Sciences, USC.

Gordon Wimpress, BA, BSc, is a Technical Projects Specialist, SC-CTSI.

Amelia Spinrad, MS, is Associate Director, Global Regulatory Affairs, Gilead Sciences, Foster City, Calif.

Annie Ly, MS, is currently an MD Candidate at the University of Illinois, Chicago.

Eunjoo Pacifici, PharmD, PhD, is Chair and Associate Professor in the Department of Regulatory and Quality Sciences at the Alfred E. Mann School of Pharmacy and Pharmaceutical Sciences, USC.